SixTrack was developed by Frank Schmidt of the CERN Accelerators and Beams Department, based on an earlier program developed at DESY, the German Electron Synchrotron in Hamburg. SixTrack produces results that are essential for verifying the long-term stability of the high-energy particles in the LHC. Lyn Evans, head of the LHC project, stated that "the results from SixTrack are really making a difference, providing us with new insights into how the LHC will perform".

Particle motion in an accelerator is at least four-dimensional, the coordinates being x (horizontal displacement), y (vertical displacement) and the two conjugate momenta. The Figure shows an example of motion in the vicinity of a stable fix-line (thin line): stable motion around the fix-line (small torus) and motion just outside the resonance structure (large torus). The color (rainbow scale) encodes the fourth coordinate, so that particle motion in the four-dimensional phase space can be visualized in 3D.

By repeating such calculations thousands of times, it is possible to map out the conditions under which the beam should be stable.

Physical space image of a particle on a stable orbit. Each point represents the particle position after one turn around the accelerator. For stable orbits, the particle trajectory explores only a limited part of the entire phase space.

Physical space image of a particle on an unstable orbit. Each point represents the particle position after one turn around the accelerator. For unstable orbits, the points tend to fill out the entire phase space. Eventually, unstable particles leave their orbit completely and are lost on the vacuum pipe of the accelerator.
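The turn-by-turn pictures above are Poincaré sections: the particle state is recorded once per turn. A minimal sketch of the idea, assuming a toy one-turn map (the Hénon map, i.e. a thin sextupole kick followed by a linear rotation) in place of the real LHC lattice, and only one transverse plane instead of the full four-dimensional phase space. The tune `NU`, the loss limit and all function names are hypothetical illustration choices, not SixTrack's.

```python
import math

NU = 0.205                        # hypothetical fractional tune of the toy ring
COS_MU = math.cos(2 * math.pi * NU)
SIN_MU = math.sin(2 * math.pi * NU)

def one_turn(x, p):
    """Toy one-turn map (Hénon map): thin sextupole kick, then linear rotation."""
    p = p + x * x                 # non-linear kick from a thin sextupole
    return x * COS_MU + p * SIN_MU, -x * SIN_MU + p * COS_MU

def poincare_section(x, p, n_turns, limit=10.0):
    """Record (x, p) after every turn; stop early if the particle escapes beyond `limit`."""
    points = []
    for _ in range(n_turns):
        x, p = one_turn(x, p)
        if abs(x) > limit or abs(p) > limit:
            break                 # particle counted as lost (hits the "vacuum pipe")
        points.append((x, p))
    return points

stable = poincare_section(0.05, 0.0, 1000)    # small initial amplitude
unstable = poincare_section(1.5, 0.0, 1000)   # large initial amplitude
print(len(stable), max(abs(x) for x, _ in stable))
print(len(unstable))
```

The small-amplitude particle stays confined near a closed curve for all 1000 turns, while the large-amplitude one escapes after a few turns; plotting the recorded points gives a two-dimensional analogue of the stable and unstable pictures.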

Technical challenges

Important parameters

In the LHC the energy available in the collisions between the protons will reach 14 TeV, about 10 times that of past and existing accelerators. But "energy" alone is not enough. To guarantee an "effective physics programme", i.e. to make possible all the discoveries that the LHC aims to achieve, another very important parameter must be considered: the "luminosity".

The "luminosity" of a collider is a quantity proportional to the number of collisions per second. Whereas in past and present colliders the luminosity peaks around L = 10³² cm⁻² s⁻¹, in the LHC it will have to reach L = 10³⁴ cm⁻² s⁻¹. This will be achieved by filling each of the two rings with 2808 bunches of 1.15×10¹¹ particles each.
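How the bunch parameters translate into luminosity can be checked with the standard formula for round Gaussian beams, L = N² n_b f_rev γ F / (4π ε_n β*). A minimal sketch: N, n_b and f_rev come from the text, while the Lorentz factor γ, normalized emittance ε_n, β* at the collision point and the geometric reduction factor F are assumptions (what we believe are the nominal LHC design values), not figures given in this article.

```python
import math

# Values quoted in the text
N_P = 1.15e11        # protons per bunch
N_B = 2808           # bunches per beam
F_REV = 11245.0      # revolution frequency [Hz]

# Assumptions: nominal design values, not stated in the text
GAMMA = 7461.0       # Lorentz factor of a 7 TeV proton
EPS_N = 3.75e-6      # normalized transverse emittance [m]
BETA_STAR = 0.55     # beta function at the interaction point [m]
F_GEOM = 0.836       # geometric reduction factor from the crossing angle

def luminosity_cm2s(n_p, n_b, f_rev, gamma, eps_n, beta_star, f_geom):
    """Peak luminosity for round Gaussian beams, converted from m^-2 s^-1 to cm^-2 s^-1."""
    lumi_m2 = n_p**2 * n_b * f_rev * gamma * f_geom / (4 * math.pi * eps_n * beta_star)
    return lumi_m2 * 1e-4    # 1 m^2 = 1e4 cm^2

L = luminosity_cm2s(N_P, N_B, F_REV, GAMMA, EPS_N, BETA_STAR, F_GEOM)
print(f"L = {L:.2e} cm^-2 s^-1")   # prints L = 1.00e+34 cm^-2 s^-1
```

The quadratic dependence on N explains why densely populated bunches are so valuable, and also why the beam-beam effect discussed below sets a limit.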

These unprecedented energy and luminosity values impose new and stringent requirements on the machine design and performance.

Some disturbing effects

While the protons circulate around the LHC 11,245 times a second, a number of effects dilute the beams by increasing their size, and hence degrade the luminosity. Let's look at the main ones and how CERN scientists are trying to cure them.
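The figure of 11,245 turns per second follows from the ring circumference (26.658 km, quoted further on) and the proton speed, which is extremely close to the speed of light. A small sketch, assuming 450 GeV at injection and 7 TeV per beam at top energy; it also converts the one million turns tracked in the simulations into real beam time.

```python
import math

C_LIGHT = 299_792_458.0      # speed of light [m/s]
PROTON_REST_GEV = 0.938272   # proton rest energy [GeV]
CIRCUMFERENCE_M = 26_658.0   # LHC circumference from the text (26.658 km)

def revolution_frequency(energy_gev, circumference_m=CIRCUMFERENCE_M):
    """Turns per second for a proton of total energy `energy_gev` on a ring of the given length."""
    gamma = energy_gev / PROTON_REST_GEV
    beta = math.sqrt(1.0 - 1.0 / gamma**2)   # v/c, extremely close to 1 at LHC energies
    return beta * C_LIGHT / circumference_m

for energy in (450.0, 7000.0):   # assumed injection and top energy per beam [GeV]
    f_rev = revolution_frequency(energy)
    print(f"{energy:6.0f} GeV: f_rev = {f_rev:.1f} Hz, "
          f"1e6 turns = {1e6 / f_rev:.1f} s")
```

A million-turn simulation therefore corresponds to only about a minute and a half of real machine time, a small fraction of the time a beam is kept circulating in the machine.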

Non-linear and chaotic motion

Tiny spurious non-linear components of the guiding and focusing magnetic fields of the machine can make the motion slightly chaotic, so that after a large number of turns the particles may be lost. In the LHC the destabilizing effects of magnetic imperfections are more pronounced at injection energy, because the imperfections are larger and because the beams occupy a larger fraction of the coil cross-section. There are two ways to cure this:

- We must evaluate the Dynamic Aperture (i.e., the region in the phase space of the beam within which particles remain stable for a given time) and make sure that it exceeds the beam size with a sufficient safety margin (ideally it should exceed the size of the vacuum pipe).

- For the time being, no theory can predict with sufficient accuracy the long-term behavior of particles in non-linear fields. Instead we use fast computers to track hundreds of particles step by step through the thousands of LHC magnets for up to a million turns. The results are used to define tolerances for the quality of the magnets at the design stage and during production.
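The tracking approach can be illustrated on a toy model: launch particles at increasing amplitudes, track each one turn by turn, and call the dynamic aperture the largest amplitude below which every particle survives the requested number of turns. A minimal sketch, assuming the Hénon map (thin sextupole kick plus linear rotation) as a stand-in for the LHC one-turn map, a single transverse plane, and a scan along x only; `NU`, `LOSS_LIMIT` and the helper names are hypothetical, and SixTrack's real lattice model and loss criteria are far more detailed.

```python
import math

NU = 0.205                        # hypothetical fractional tune of the toy ring
COS_MU = math.cos(2 * math.pi * NU)
SIN_MU = math.sin(2 * math.pi * NU)
LOSS_LIMIT = 10.0                 # hypothetical aperture: beyond this a particle counts as lost

def one_turn(x, p):
    """Toy one-turn map (Hénon map): thin sextupole kick, then linear rotation by 2*pi*NU."""
    p = p + x * x
    return x * COS_MU + p * SIN_MU, -x * SIN_MU + p * COS_MU

def survived_turns(x, p, n_turns):
    """Track one particle; return the number of turns completed before it is lost."""
    for turn in range(n_turns):
        x, p = one_turn(x, p)
        if abs(x) > LOSS_LIMIT or abs(p) > LOSS_LIMIT:
            return turn
    return n_turns

def dynamic_aperture(n_turns, amplitudes):
    """Largest scanned amplitude (along x, p = 0) below which every particle survives n_turns."""
    da = 0.0
    for a in sorted(amplitudes):
        if survived_turns(a, 0.0, n_turns) < n_turns:
            break                 # first loss in the scan: the stable region ends here
        da = a
    return da

amplitudes = [0.01 * i for i in range(1, 151)]   # initial amplitudes 0.01 ... 1.5
for n_turns in (100, 1_000, 10_000):
    print(n_turns, dynamic_aperture(n_turns, amplitudes))
```

Because a particle that survives N turns also survives every shorter run, the estimate can only shrink (or stay the same) as the number of turns grows, which is the behavior shown for the real machine in the dynamic-aperture-versus-time plot.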

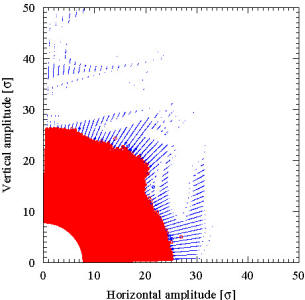

The red area represents initial conditions that are stable up to 100,000 turns around the LHC. The blue circles represent unstable initial conditions: their radius is proportional to the stability time.

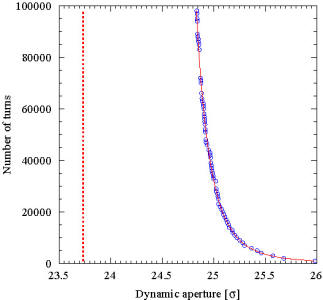

The circles represent the variation of the radius of the dynamic aperture with time. The dashed red curve represents the asymptotic value.


These plots have been obtained using the measured magnetic field errors to simulate the beam dynamics in the LHC machine as installed. They refer to the dynamics at 3.5 TeV, before any change of the optics from the configuration used at injection to the one used for collision. The large dynamic aperture is explained by the small beam size and the excellent field quality of the main dipoles.


Beam-beam effect

When two bunches cross in the center of a physics detector, only a tiny fraction of the particles collide head-on to produce the wanted events. All the others are deflected by the strong electromagnetic field of the opposing bunch. These deflections, which are stronger for denser bunches, accumulate turn after turn and may eventually lead to particle loss. This beam-beam effect was studied in previous colliders, where experience showed that the bunch density cannot be increased beyond a certain value, the beam-beam limit, without losing a sufficiently long beam lifetime. To reach the desired luminosity, the LHC has to operate as close as possible to this limit.
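The strength of the head-on beam-beam kick is usually quantified by the beam-beam parameter, which for round Gaussian beams reduces to ξ = N r_p / (4π ε_n): the beam energy and β* cancel out, leaving only the bunch population N and the normalized emittance ε_n. A minimal sketch, assuming round beams, one head-on collision point and a nominal emittance of 3.75 μm (an assumption, not a value from this article); it makes "stronger for denser bunches" quantitative.

```python
import math

R_P = 1.534_698e-18   # classical proton radius [m]
N_P = 1.15e11         # protons per bunch, from the text
EPS_N = 3.75e-6       # normalized transverse emittance [m]; assumed nominal value

def beam_beam_parameter(n_p, eps_n):
    """Linear head-on beam-beam tune shift per interaction point, round Gaussian beams.

    Proportional to the bunch population and inversely proportional to the
    emittance, i.e. larger for denser bunches.
    """
    return n_p * R_P / (4 * math.pi * eps_n)

xi = beam_beam_parameter(N_P, EPS_N)
print(f"xi per IP = {xi:.4f}")
print(f"twice the bunch population: {beam_beam_parameter(2 * N_P, EPS_N):.4f}")
print(f"half the emittance:         {beam_beam_parameter(N_P, EPS_N / 2):.4f}")
```

Raising the luminosity by packing more protons into smaller bunches raises ξ in exactly the same proportion, which is why the beam-beam limit caps the achievable bunch density.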

Collective instabilities

While travelling down the 26.658 km long LHC beam pipe at a speed close to the speed of light, each of the 2808 proton bunches leaves behind an electromagnetic wake-field which perturbs the succeeding bunches and can lead to beam loss. These collective instabilities can be severe in the LHC because of the large beam current needed to provide high luminosity. Their effect is minimized by a careful control of the electromagnetic properties of the elements surrounding the beam, and by sophisticated feedback systems.
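The "large beam current" can be estimated directly from numbers given in the text: the total charge circulating in one ring, multiplied by the number of turns per second. A short sketch using only the elementary charge as an extra constant.

```python
E_CHARGE = 1.602176634e-19   # elementary charge [C]

# Values quoted in the text
N_BUNCHES = 2808
PROTONS_PER_BUNCH = 1.15e11
F_REV_HZ = 11245.0

def beam_current(n_bunches, protons_per_bunch, f_rev_hz):
    """Average circulating current: total charge in the ring times turns per second."""
    return n_bunches * protons_per_bunch * E_CHARGE * f_rev_hz

current_a = beam_current(N_BUNCHES, PROTONS_PER_BUNCH, F_REV_HZ)
print(f"I = {current_a:.2f} A per beam")
```

Roughly half an ampere per beam, carried in short, intense bunches, is what drives the wake-fields that the impedance control and feedback systems must keep in check.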

Quenches

Despite all precautions the beam lifetime will not be infinite; in other words, a fraction of the particles will diffuse towards the beam pipe wall and be lost. When this happens, the particle energy is converted into heat in the surrounding material, and this can "quench" the magnet out of its cold, superconducting state. A quench in any of the LHC superconducting magnets (there are more than 6,000 of them in the LHC ring) will disrupt machine operation for several hours! To avoid this, a collimation system will catch the unstable particles before they can reach the beam pipe wall, confining losses to well-shielded regions far from any superconducting element. To design effective control systems, safety engineers are using advanced computer programmes to perform coupled mechanical-magnetic-thermal analyses of the stresses induced by a quench.
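Why even a small fraction of lost particles matters becomes clear from the energy stored in one beam. A short sketch, assuming 7 TeV per proton (half of the 14 TeV collision energy quoted earlier) and the bunch parameters from the text; the "one millionth" loss fraction is purely illustrative.

```python
J_PER_EV = 1.602176634e-19   # joules per electronvolt

# Values from the text (7 TeV per beam = half of the 14 TeV collision energy)
N_BUNCHES = 2808
PROTONS_PER_BUNCH = 1.15e11
ENERGY_PER_PROTON_EV = 7e12

def stored_energy_joule(n_bunches, protons_per_bunch, energy_ev):
    """Energy carried by all protons of one beam: number of protons times energy per proton."""
    return n_bunches * protons_per_bunch * energy_ev * J_PER_EV

e_beam = stored_energy_joule(N_BUNCHES, PROTONS_PER_BUNCH, ENERGY_PER_PROTON_EV)
print(f"stored energy per beam = {e_beam / 1e6:.0f} MJ")
print(f"one millionth of it    = {e_beam * 1e-6:.0f} J")   # illustrative loss fraction
```

Hundreds of megajoules per beam means that even a tiny fraction deposited in the wrong place can heat a superconducting magnet, hence the need for collimators that absorb the losses in dedicated, shielded regions.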

Beam physics results so far

Since 2004, LHC@home has been distributing the SixTrack programme (running on Windows or Linux machines), which helps accelerator physicists simulate the stability of the proton beams in the Large Hadron Collider (LHC).

In a long study in 2005, the accelerator physicists at CERN evaluated different parameters for the LHC machine using LHC@home, and the final design choices were based on the outcome of this study. Of particular importance was the selection of the beam crossing scheme at the experiments, where the two beams collide. The results were presented at the European Particle Accelerator Conference in Edinburgh (June 2006).

The LHC startup and commissioning require very careful operation of the machine, and detailed procedures for this had to be established. During the installation of the LHC, all machine elements were tested and their properties recorded in a database. Exact knowledge of these properties allows the commissioning and operational scenarios of the LHC to be simulated and optimized. This is particularly true for the final focusing (triplet) magnets, which minimize the beam sizes for the most efficient collisions.

Following the outstanding success of the previous simulation campaign, it was decided in 2007 to set up a new study programme using LHC@home to provide the necessary data for the scientists working on this challenging task.

From 2008 to 2010, the huge amount of magnetic measurement data was used to build a more realistic model of the LHC as installed in the tunnel. This led to massive tracking campaigns aimed at studying the dynamic aperture in the various configurations used for the beam commissioning, which was to start in September 2008.

So your participation in LHC@home has really helped to build the LHC!

Beam physics results for the future

In 2011 the LHC is running and providing data to the experimentalists. Accelerator physicists now have a unique chance to measure and simulate the beam dynamics in the LHC.

Furthermore, the LHC should deliver even more in the future! There is an approved project for a tenfold upgrade of the LHC performance by about 2026, the so-called High-Luminosity LHC. Once more, the beam dynamics of the upgraded machine will need to be studied with care, and massive numerical simulations will be needed.

By joining LHC@home and subscribing to the SixTrack project, you will help scientists to study these effects in much greater detail than was previously possible, to ensure that we understand the beam dynamics in the current LHC, and to prepare the upgrade runs as smoothly and efficiently as possible.

So your participation in LHC@home will really help to build the High-Luminosity LHC!

Other results

Besides helping to build the LHC, LHC@home has proved to be an invaluable, even essential, tool for investigating and solving fundamental problems with floating-point arithmetic. Different processors, even of the same brand, all conforming more or less to the IEEE 754 standard, produce different results, a problem further compounded by the different libraries provided for Linux and Windows. In particular, a major problem was the evaluation of elementary functions such as the exponential and the logarithm, which are not covered by the standard at all (apart from the square root function). This problem was solved by using the correctly rounded elementary function library (crlibm) developed by a group at the École Normale Supérieure de Lyon. Because the motion of the particles is often chaotic, even a difference of one unit in the last digit of a binary number produces completely different results: the difference grows exponentially as the particle circulates for up to one million turns around the LHC. This makes it extremely difficult to find bugs or to verify new software and hardware platforms. Requiring identical results has nevertheless allowed us to detect failing hardware that would otherwise have gone unnoticed, and to find compiler bugs. With your help, this methodology is being extended to ensure identical results on any IEEE 754-compliant processor with a standard-compliant compiler (C++ or FORTRAN), which will allow us to work on any such system and even on Graphical Processing Units (GPUs).